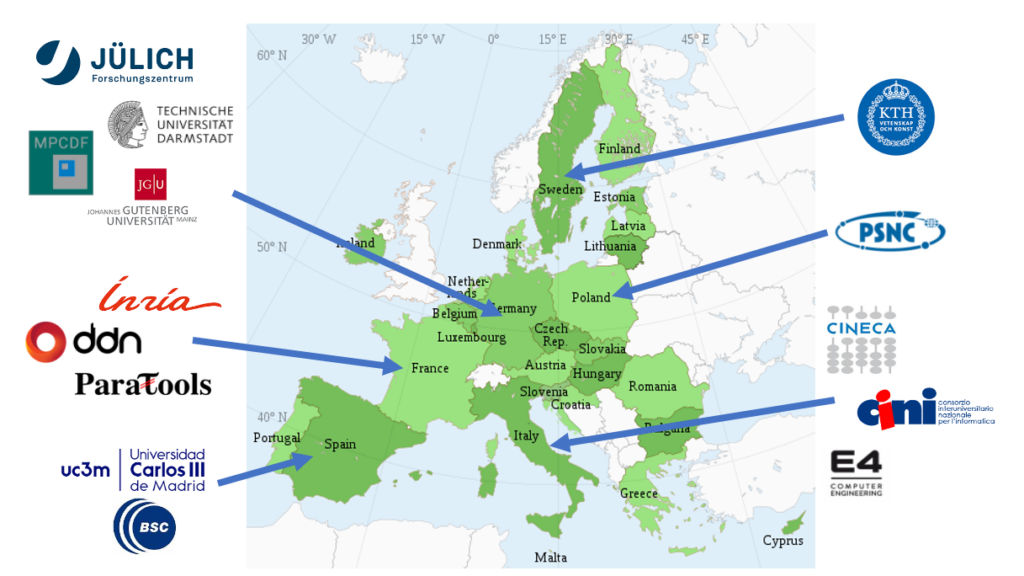

UNIVERSIDAD CARLOS III DE MADRID (UC3M)

Founded in 1989, University Carlos III of Madrid (UC3M) is a public university characterized by its strong international focus, the quality of its faculty, excellence in research, and commitment to society. Although it is a young university, its achievements have placed it among the top universities in Spain. UC3M was one of the top five Spanish universities selected in 2009 for the Spanish Campus of International Excellence program. In 2009, UC3M also ranked fourth among Spanish universities in participation in the EU Framework Programme. In the QS World University Rankings, UC3M is placed 280th in the world, in the 151-200 band for Computer Science, and 20th among the top 50 universities under 50 years old. UC3M is also listed among the top 200 universities in Computer Science according to the ARWU ranking. During FP7, UC3M participated in 72 funded projects, including 20 MSCA actions. In H2020, UC3M has already obtained 33 funded projects, as well as 5 ERC grants.

The Computer Architecture, Communication and Systems Group (ARCOS) is part of the School of Engineering. It was formed in 2001 and has attracted faculty members from different universities in Spain and other countries, as well as from industry. The ARCOS group focuses on research in parallel and distributed computing systems and real-time embedded systems. ARCOS participated in the ComplexHPC COST Action (IC 804). The group coordinated the recently finished FP7 REPARA project and also coordinates the NESUS COST Action (IC 1304). Currently, the group participates in the H2020 project RePhrase. In addition, ARCOS is a member of international networks such as HiPEAC (European Network of High Performance and Embedded Architecture and Compilation) and ETP4HPC (European Technology Platform for High Performance Computing), and actively participates in the ISO C++ standardization effort through ISO/IEC JTC1/SC22/WG21 (the C++ standards committee), representing the Spanish National Body (UNE).

JOHANNES GUTENBERG-UNIVERSITAT MAINZ (JGU)

Johannes Gutenberg University Mainz (JGU) is one of the largest and most diverse universities in Germany. JGU hosts around 31,000 students from over 120 nations and combines all academic disciplines under one roof, including its University Medical Centre, the Mainz Academy of Fine Arts, the Mainz School of Music, and the Faculty of Translation Studies, Linguistics and Cultural Studies in Germersheim. In over 100 institutes and clinics, 4,400 academics, 570 of whom are professors, teach and carry out research. With 75 fields of study and more than 270 degree courses, JGU offers an extraordinarily broad range of courses. JGU is also home to four partner institutes involved in top-level non-university research: the Max Planck Institute for Chemistry (MPI-C), the Max Planck Institute for Polymer Research (MPI-P), the Helmholtz Institute Mainz (HIM) and the Institute of Molecular Biology (IMB). JGU’s core research areas are particle and hadron physics, the materials sciences and translational medicine. JGU’s success in Germany’s Excellence Strategy program has confirmed its academic status: in 2018, the research network PRISMA+ (Precision Physics, Fundamental Interactions and Structure of Matter) was recognized as a cluster of excellence. Moreover, excellent placings in national and international rankings as well as numerous other honors and awards demonstrate just how successful Mainz-based researchers and academics are. JGU will participate in this project through the Zentrum für Datenverarbeitung (ZDV), the university’s data and HPC center.
ZDV is a scientific institution and a central IT service provider for all departments and institutions of the university. As a full member of the German Gauss Alliance, it supports scientific computing by operating the high-performance computers MOGON I and MOGON II and by providing computational scientists who support users on all questions concerning high-performance computing (HPC) and the optimisation of HPC applications. Scientific contributions on HPC and storage systems are developed in various projects sponsored by the DFG, the BMBF, industry or the EU, together with our partners worldwide.

BARCELONA SUPERCOMPUTING CENTER (BSC)

The Barcelona Supercomputing Center (BSC) was established in 2005 and is the Spanish national supercomputing facility and a hosting member of the PRACE distributed supercomputing infrastructure. The Center houses MareNostrum, one of the most powerful supercomputers in Europe. The mission of BSC is to research, develop and manage information technologies in order to facilitate scientific progress. BSC was a pioneer in combining HPC service provision with R\&D in both computer and computational science (life, earth and engineering sciences) under one roof. The centre fosters multidisciplinary scientific collaboration and innovation and currently has over 400 staff from 41 countries. In 2011, BSC was one of only eight Spanish research centres recognized by the national government as a “Severo Ochoa Centre of Excellence”. BSC has collaborated with industry since its creation and has participated in projects with companies such as ARM, Bull and Airbus, as well as numerous SMEs. BSC also participates in various bilateral joint research centers with companies such as IBM, Microsoft, Intel, NVIDIA and the Spanish oil company Repsol. The centre has been extremely active in the EC Framework Programmes and has participated in over one hundred projects funded by them. BSC is a founding member of HiPEAC and ETP4HPC, participates in the most relevant international roadmapping and discussion forums, and has strong links to Latin America.

TECHNISCHE UNIVERSITAT DARMSTADT (TUDA)

Technische Universität Darmstadt is a leading research-oriented university in the Rhine-Main area of Germany with a strong focus on computer science and engineering. Since its foundation in 1877, TU Darmstadt has played its part in addressing the urgent issues of the future with pioneering achievements and outstanding research and teaching. TU Darmstadt focusses on selected, highly relevant problem areas. Technology is at the heart of all disciplines at TU Darmstadt. The natural sciences as well as social sciences and humanities cooperate closely with engineering. In order to expand its expertise strategically, TU Darmstadt maintains a variety of partnerships with companies and research institutions. It is a vital driving force in the economic and technological development of the Frankfurt-Rhine-Neckar metropolitan area. The Laboratory for Parallel Programming, which represents TU Darmstadt in this project, belongs to the university’s Department of Computer Science. It devises novel methods, tools, and algorithms to exploit massive parallelism on modern hardware architectures. It conducts research in five areas, namely (a) discovery of parallelism in sequential programs, (b) performance analysis of parallel programs, (c) scalable parallel algorithms, (d) dynamic management of supercomputer resources, and (e) deep neural networks. Most relevant for this project are research areas (b) and (d), through which the lab contributes expertise in automatic performance modelling and scheduling algorithms, respectively. The scheduling algorithms it has designed in prior work for evolving and malleable jobs will provide the basis for the malleability management. For this purpose, they will be extended to take I/O resources into account, leveraging another element of prior work aiming at the co-allocation of compute and storage resources on systems with tiered storage.
The automatic performance modelling techniques it has created, in particular, the open-source performance-modelling tool Extra-P, will be used to model how I/O requirements change as jobs are shrunk or expanded.

DATADIRECT NETWORKS FRANCE (DDN)

For over 25 years, DataDirect Networks France (DDN) has designed, developed, deployed and optimized systems, software and solutions which enable enterprises, service providers, universities and government agencies to generate more value and accelerate time to insight from their data and information, on premise and in the cloud. DDN systems now power more than 70\% of the Top500 companies. With around two-thirds of its workforce in R\&D, DDN is a leading innovator in the field of high-performance storage. Over the past three years, DDN Storage has established an R\&D center in Meudon, close to Paris, France. This facility hosts the core development team of the Software Defined Storage group, with more than 20 engineers, a large fraction of them holding a Ph.D. DDN is active in the European R\&D ecosystem through its participation in the ETP4HPC and its involvement in teaching HPC I/O at European universities (Versailles, Trieste, Evry). DDN Storage is an associated member of the Energy oriented Center of Excellence (EoCoE). DDN Storage owns several facilities with hardware prototypes in Europe, one in Paris and one in Dusseldorf. DDN Storage is an active open-source contributor, as illustrated by its numerous contributions to the Lustre high-performance file system.

INSTITUT NATIONAL DE RECHERCHE EN INFORMATIQUE ET AUTOMATIQUE (INRIA)

The Institut National de Recherche en Informatique et Automatique (INRIA), the French research institute for digital sciences, promotes scientific excellence and technology transfer to maximise its impact. It employs 2,500 people. Its 200 agile project teams, generally with academic partners, involve more than 3,000 scientists in meeting the challenges of computer science and mathematics, often at the interface of other disciplines. Inria works with many companies and has assisted in the creation of over 160 startups. It strives to meet the challenges of the digital transformation of science, society and the economy.

PARATOOLS SAS (PARATOOLS SAS)

ParaTools is an HPC software development company that originally specialised in performance profiling, as indicated by its name. In 2014, ParaTools SAS opened in France as a spin-off of its parent company based in Eugene, Oregon. This new office has since specialised in low-level and middleware system development for HPC programming models. In particular, contributions were made to MPI runtimes, container orchestrators and support for next-generation network cards. The company therefore has in its portfolio state-of-the-art performance tools (ParaTools is the sole distributor of the TAU performance system) and middleware technologies, and it is actively developing both an MPI and an OpenMP implementation. Recently, building blocks for the horizontal scaling of MPI (combining services instead of processes) were developed around the notion of RPC (or active messages), both on vanilla MPI and on MPI-enabled network cards (BULL BXI). This technology, which we see as a good complement to latency-oriented MPI, is a key component for client-server HPC, which is currently (mostly) limited to monolithic payloads.

FORSCHUNGSZENTRUM JULICH GMBH (FZJ)

Forschungszentrum Jülich GmbH, a member of the Helmholtz Association, is one of the largest research centers in Europe. It pursues cutting-edge interdisciplinary research addressing the challenges facing society in the fields of health, energy and the environment, and information technologies. Within the Forschungszentrum, the Jülich Supercomputing Centre (JSC) is one of the three national supercomputing centers in Germany as part of the Gauss Centre for Supercomputing (GCS). FZJ operates supercomputers that are among the largest in Europe. FZJ has more than 30 years of expertise in providing supercomputer services to national and international user communities. It undertakes research and development in HPC architectures, performance analysis, HPC applications, Grid computing and networking. FZJ has successfully managed numerous national and European projects, including the PRACE Preparatory Phase project and multiple implementation phases. The FZJ key personnel participating are part of the joint research group “High Productivity Data Processing (HPDP)” at JSC and the University of Iceland (which was ranked in 2019 as the 6th best worldwide in the field of Remote Sensing in the Shanghai Ranking’s Global Ranking of Academic Subjects). The group is highly active in developing parallel and scalable machine (deep) learning algorithms for remote sensing data processing and many other types of applications (e.g., in the medical research and retail sectors). By being located at JSC, HPDP can rely on HPC technologies with MPI, OpenMP and CUDA (with TensorFlow, Keras, PyTorch, Chainer, Horovod) but also on innovative quantum computing systems. The backbone of the research group is the large number of PhD students who are jointly supervised with the University of Iceland. Furthermore, HPDP works actively with the Cross-Sectional Team Deep Learning (CST DL) at JSC.
The CST DL conducts basic and applied research in the field of adaptive multi-layer neural network architectures that learn complex tasks from very large amounts of raw, unprocessed data. The group’s special focus is on unsupervised deep learning and generative models to enable processing of unlabeled data, deep reinforcement learning for complex control tasks and control optimisation, multi-modal learning, transfer learning, multi-task learning, one-shot learning (learning from few examples only), learning to generalise from synthetic to real-world data, learning to learn (meta-learning: data-driven adaptation of learning procedure and network architecture), and active learning (active selection of data to be processed).

CONSORZIO INTERUNIVERSITARIO NAZIONALE PER L’INFORMATICA (CINI)

CINI, a consortium of 47 Italian public universities, is today the main point of reference for national academic research in the fields of Computer Engineering, Computer Science, and Information Technologies. CINI aims at providing added value to the member universities, the Italian production system, the Italian Public Administration, and the country as a whole, representing almost the entire Italian academic informatics community. It involves 1,300+ professors of both Computer Science (Italian SSD INF/01) and Computer Engineering (Italian SSD ING-INF/05), belonging to 47 universities that do research in CS/CE, deliver MS/PhD degrees, and are publicly funded. Established in 1989, CINI is under the supervision of the competent Italian Ministry for University and Research. The Consortium is subject to the periodic Quality Evaluation of its research activities by ANVUR, the Italian National Agency for the Evaluation of the University System and Research. CINI supports: joint scientific activities of research and technology transfer, both basic and applied, with universities, institutes of higher education, research institutions, industries, and Public Administrations; access to and participation in projects and scientific research activities; technology transfer between partner universities and Public Administration, private and public companies, and startups; the creation and development of national research labs with strong connections to the territory; and customised higher education tracks. In all its activities, CINI ensures the highest quality at the national (and, where needed, international) level by relying on several areas of academic excellence, the critical mass necessary to achieve the targets, and its geographical distribution throughout Italy.
CINI is currently equipped with 10 Thematic R\&D National Labs, distributed throughout the whole national territory, focused on Artificial Intelligence and Intelligent Systems (AIIS), Assistive Technologies (AsTech), Big Data, Cybersecurity, Embedded Systems \& Smart Manufacturing, Formal Methods and Algorithmics for Life Sciences (Infolife), ICT Skills, Training, and Certification (CFC), Informatica \& Società, ITEM – C. Savy, and Smart Cities and Communities. A novel CINI working group on Key Technologies and Tools for HPC started in 2019.

CINECA CONSORZIO INTERUNIVERSITARIO (CINECA)

CINECA, established in 1969, is a non-profit consortium of 70 Italian universities, 9 national research institutions, and the Ministry of Education, University and Research (MIUR). Cineca is the largest Italian supercomputing centre, with an HPC environment equipped with cutting-edge technology and highly qualified personnel who cooperate with researchers in the use of the HPC infrastructure, in both the academic and industrial fields. Cineca’s mission is to enable the Italian and European research community to accelerate scientific discovery using HPC resources in a profitable way, exploiting the newest technological advances in HPC, data management, storage systems, tools, services and expertise at large. On mandate of the Ministry of University and Research, Cineca represents Italy in PRACE, the Partnership for Advanced Computing in Europe (www.prace-ri.eu), a persistent pan-European Research Infrastructure (RI) providing leading HPC resources to enable world-class science and engineering for academia and industry in Europe. Cineca is one of the four PRACE Tier-0 Hosting Centres. Since June 2014, Cineca is one of the founding members of the European Technology Platform for HPC (ETP4HPC), an industry-led forum providing a framework for stakeholders to define research priorities and action plans in a number of technological areas where achieving EU growth, competitiveness and sustainability requires major research and technological advances in the medium to long term. Moreover, the Cineca HPC Department is the Italian representative in the pan-European EUDAT Collaborative Data e-Infrastructure and a core partner in the Human Brain Project, the EU flagship project facing the big challenge of understanding the human brain. More recently, Cineca has been selected as a hosting entity for one of the future EuroHPC precursor-to-exascale European supercomputers.
Besides the national scientific HPC facility, Cineca manages and exploits the supercomputing facility of the Italian energy company ENI. The HPC Department at Cineca has long experience in cooperating with researchers in parallelising, enabling and scaling up their applications in different computational disciplines, covering condensed matter physics, astrophysics, geophysics, chemistry, earth sciences, engineering, CFD, mathematics, life sciences and bioinformatics, but also non-traditional ones, such as biomedicine, archaeology and data analytics. Cineca has strong relationships with its stakeholders and collaborates with the scientific communities to enable and develop new applications and tools to better address the challenges of high-end HPC systems. Cineca has wide experience in providing education and training in the different fields of parallel computing and computational sciences and is one of the six PRACE Advanced Training Centres (PATCs). Finally, Cineca has a long history of co-designing, deploying and managing high-TRL (6 to 8) HPC prototypes, such as the hot-water-cooled heterogeneous Eurora system, which was ranked first in the Green500 in June 2013, and the more recent DAVIDE OpenPower system.

E4 COMPUTER ENGINEERING SPA (E4)

Founded in 2002, E4 Computer Engineering has been innovating and actively encouraging the adoption of new computing and storage technologies. Because new ideas are so important, E4 invests heavily in research, and the success achieved so far is clear proof of this winning strategy. E4 has brought a chain of innovations to the market, such as the first Infiniband cluster based on commodity processors (2005), the first ARM-based multi-node cluster connected via the Infiniband network and powered by GPU accelerators (2012), and the first liquid-cooled, OpenPOWER-based, multi-node cluster connected via the Infiniband network, with on-node NVIDIA accelerators and featuring non-intrusive equipment for high-sampling-rate power measuring and capping, co-developed by E4. Thanks to its comprehensive range of hardware products, software stack and value-added services, E4 can provide customers and prospects with complete solutions for their most demanding workloads in HPC, Big Data, AI, Deep Learning, Data Analytics and Cognitive Computing, and for any challenging storage and computing requirements. E4 Computer Engineering is an SME (Small or Medium Enterprise) according to the Horizon 2020 definition. E4 Computer Engineering is a private corporation (S.p.A.) specialized in the manufacturing of medium- and high-range high-performance IT systems. E4’s products aim to meet both industrial and scientific research requirements and address the needs of universities, research centers and computing centers.

INSTYTUT CHEMII BIOORGANICZNEJ POLSKIEJ AKADEMII NAUK (PSNC)

Poznan Supercomputing and Networking Center (PSNC), affiliated to the Institute of Bioorganic Chemistry of the Polish Academy of Sciences, is one of the leading research and computing centres in Poland. PSNC focuses on using, innovating and providing HPC, grid, cloud, portal and network technologies to enable advances in science and engineering. It employs around 300 people and is organised into 4 main technical departments: Applications, Supercomputing, Network Services, and Networking. PSNC is the coordinator of PIONIER, the Polish Optical Network, and as such is a partner of the GEANT network. It also operates one of the 5 national HPC centres in Poland. On top of the existing infrastructure, PSNC has developed a large number of innovative tools, services and applications.

KUNGLIGA TEKNISKA HOEGSKOLAN (KTH)

The Royal Institute of Technology (KTH), established in 1827, is one of Europe’s top schools for science and engineering, graduating one-third of Sweden’s undergraduate and graduate engineers in the full range of engineering disciplines. Enrollment is about 17,500 students, of whom about 1,400 are pursuing PhD studies. In this proposal, KTH is represented by the PDC Center for High Performance Computing (PDC) and the Linné FLOW Centre. The Linné FLOW Centre is the leading centre for fluid mechanics research in Sweden. A large part of the research is computational, and there is strong activity in large-scale simulations of turbulent flows, so-called direct numerical simulations. To this end, FLOW was instrumental in acquiring a large dedicated cluster (10,000 cores with full-bisection Infiniband) in 2009-2013, which allowed us to perform some of the largest turbulence simulations, both in 3D turbulent boundary layers and in 2D (atmospheric) turbulence. The centre comprises about 30 senior researchers within three departments at KTH, plus about 50 active PhD students. FLOW is the main developer of the fully spectral code SIMSON (parallel efficiency ~80\% up to 16,384 cores) and an active contributor to the massively parallel code Nek5000. The latter has shown excellent scaling up to 1,200,000 cores for real turbulence applications. PDC is the lead centre for high-performance computing for the Swedish academic community, funded by the Swedish Research Council through the Swedish National Infrastructure for Computing (SNIC). PDC operates leading-edge compute resources for national users, including Sweden’s flagship system “Beskow”, a 2.5 PF Cray XC40 system, as well as resources for specific research groups. PDC has an extensive user support program, with parallelization experts working closely with users and application providers alike to optimise their programs for HPC usage.

MAX-PLANCK-GESELLSCHAFT ZUR FORDERUNG DER WISSENSCHAFTEN EV (MPG)

The Max Planck Society is Germany’s most successful research organization. Its currently 84 Max Planck Institutes and facilities conduct basic research in the service of the general public in the natural sciences, life sciences, social sciences, and the humanities. Max Planck Institutes focus on research fields that are particularly innovative, or that are especially demanding in terms of funding or time requirements. The MPG will be represented by the Max Planck Computing and Data Facility (MPG-MPCDF), which is the joint supercomputing centre of the Max Planck Society (MPG) and the Max Planck Institute for Plasma Physics (IPP) in Garching. MPCDF has a long tradition in supercomputing, starting in 1961. Expertise with applications on massively parallel computers has been built up over more than two decades. MPCDF also has a long tradition in data management, archival systems and services for large datasets with long lifetimes, e.g. large amounts of experimental data from different science areas and from supercomputer simulations. MPCDF supports the scientists of the Max Planck Society in all aspects related to computing. It operates the supercomputers of the MPG, provides remote visualization facilities, and hosts many compute clusters of Max Planck Institutes. Hierarchical storage and general archiving facilities are provided through HPSS. Specific competences of MPCDF further include multi-domain application support for high-end supercomputing systems and data services, data management, long-term archival, and global file systems. MPCDF is involved in several national and international projects.